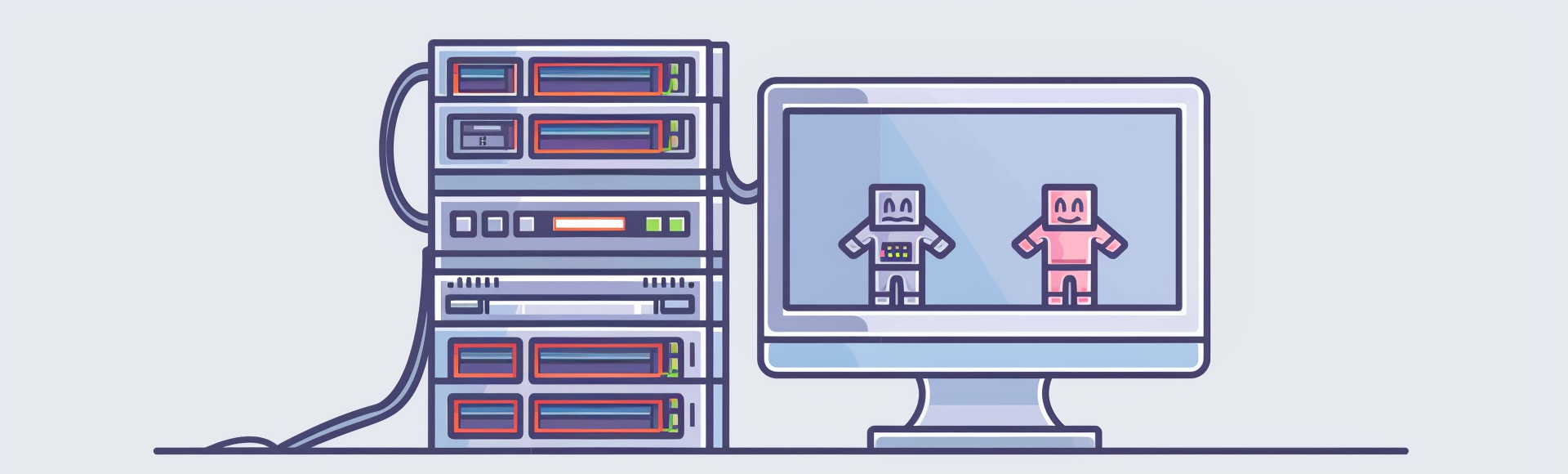

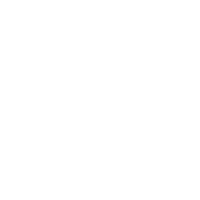

We have been hosting games for twenty years now. Twenty years of connecting players all around the world with the help of a dedicated server structure. However, it should come as no surprise that there is another common and well-known alternative for online multiplayer: Peer-to-Peer.

Peer-to-Peer, or P2P, connects players directly with each other, cutting the centralized server or “middleman” out of the network. While we are always raising our voice in praise of the countless possibilities a centralized server brings to the table, it does make sense to compare P2P and dedicated servers. We start with a brief history lesson about P2P, follow up with real use cases (in other words, games) and then examine the advantages and possible disadvantages of a Peer-to-Peer network structure.

By the end of the article, you will hopefully have a good understanding of why and when development teams choose one or the other. The goal is not simply to advertise the products we offer, as this is better done via shared user experience. Instead, with all the information at hand, it should be much easier to decide which network structure best fits your next online multiplayer project.

The original idea of this article was to discuss Peer-to-Peer and dedicated server networks solely within the scope of video games. However, multiple factors outside the gaming industry were, and still are, the driving forces behind the technological advancement of P2P, so it’s only reasonable to take a look at the big picture.

Although P2P gained massive popularity around the turn of the millennium thanks to file-sharing services like Napster and BitTorrent, it has been around since the late 1960s. UCLA, the Stanford Research Institute, UC Santa Barbara and the University of Utah used a network system that treated everyone as equal peers, without the help of a client/server format. The network was called ARPANET and is widely seen as the predecessor to the internet.

About a decade later, in 1979, came the launch of Usenet, or Unix User Network, as a free alternative to the aforementioned ARPANET. The Unix-to-Unix copy protocol made it possible for one Unix machine to dial another, exchange a file and disconnect afterwards, which essentially makes Usenet the first precursor to a P2P network. It is still actively maintained and used today, offering a text-heavy substitute for the World Wide Web.

Two key elements gave P2P a big boost from 1990 onwards. One was the initial spark of the internet; the other was the creation of video games with added multiplayer. One prominent example was Doom in 1993. id Software’s poster child for generations of shooters connected players directly, without a centralized server in between. This was the fastest solution and meant that a server couldn’t become a bottleneck, although it gave way to new issues that we discuss later in the article.

Other games like Warcraft II only seemingly used P2P: with the help of Kali, players felt like they were connecting directly to each other. However, this was not really the case, as Kali acted as a central server hub through which players could connect and play with each other. Popular games that did use P2P were Duke Nukem 3D, StarCraft and Age of Empires. The latter made use of DirectPlay, a networking API developed by Microsoft, which allowed users to create sessions, chats, scores and other features.

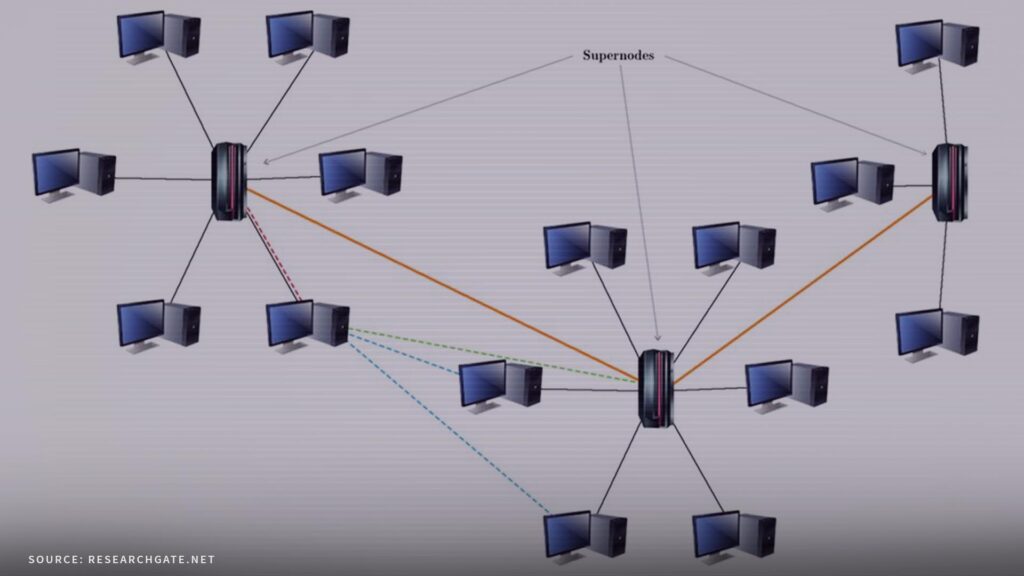

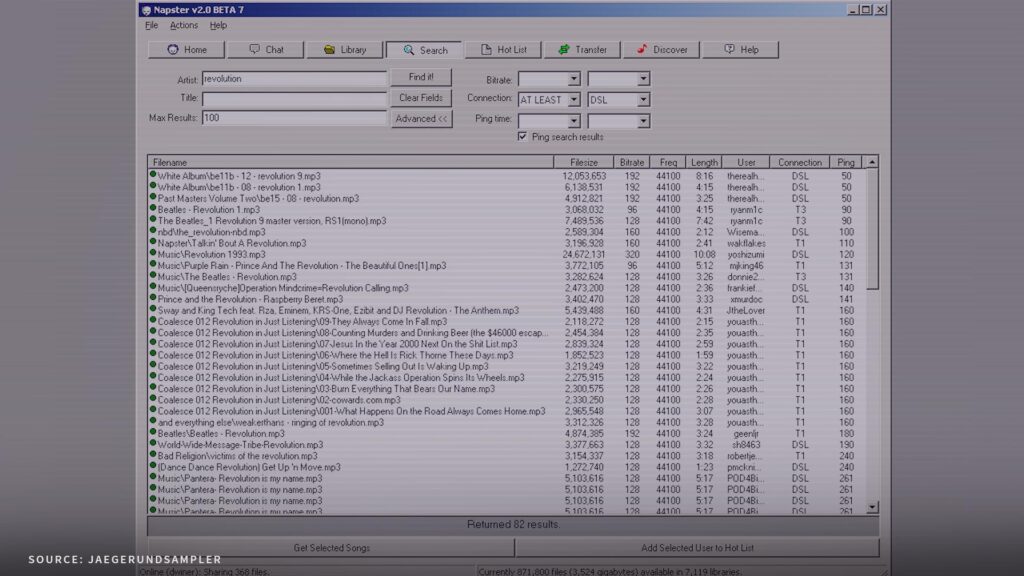

In 1999, Peer-to-Peer gained immense public attention with the launch of the file-sharing service Napster. The service allowed users to share music files with each other over the internet without having to pay for them, which spawned a massive controversy over copyright infringement. In 2001, only two years after its rise to immense popularity, came the downfall and shutdown, when a court ruled that copyright laws had been violated.

However, Napster inspired a number of other popular, or at least notable, file-sharing services. BitTorrent was and still is used for any kind of file sharing, including music, videos and games. Introduced in 2001, it became known for its fast file distribution, which relies on the strengths of Peer-to-Peer: any user downloading a file also acts as a source for other users downloading the same file.

Freenet is another good example of a decentralized P2P network. Released in 2000, it can be used for file sharing but is mostly known for its unique system of encryption and routing. Each shared file is split up, encrypted and stored in different places across the network, so that not even the uploader knows where the file is stored. This means that Freenet, although regularly seen as part of the Darknet and its illicit activities, also serves as a safe haven for privacy and confidential information, including whistleblowing.

Apart from online swap shops and communication networks, P2P was of course increasingly implemented in video games as well. The list of use cases is long and includes many games that would later fall back to P2P hybrids or pure dedicated servers; even Call of Duty relied heavily on Peer-to-Peer in its early years. Other notable titles that used Peer-to-Peer in some way are Diablo 2, Warcraft 3, Halo: Combat Evolved and Super Smash Bros. Brawl.

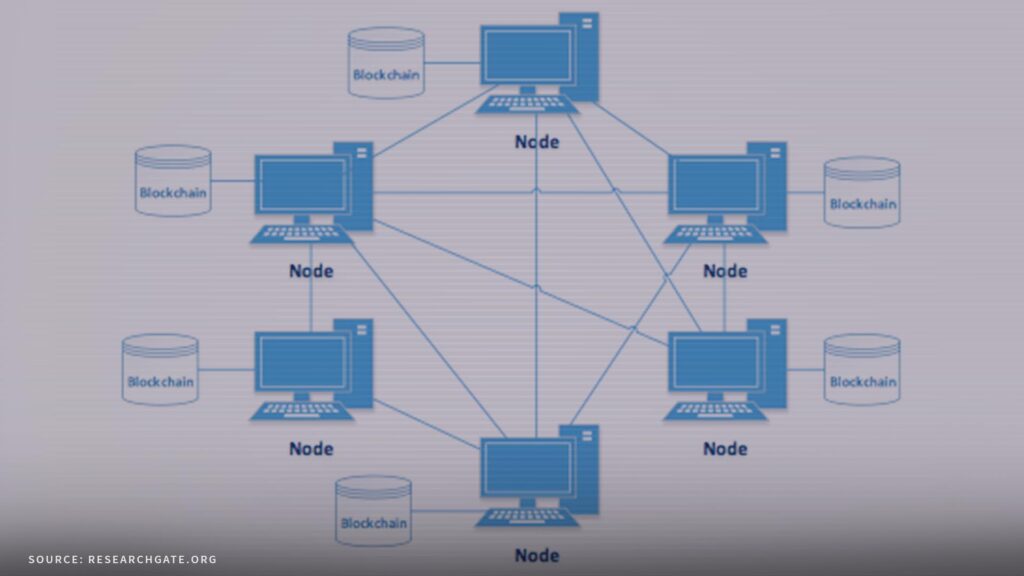

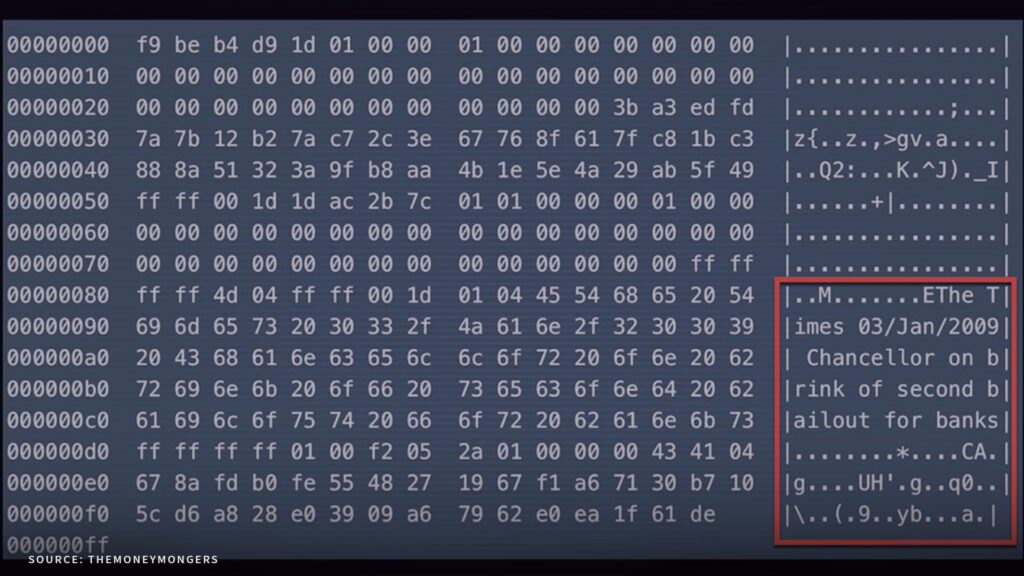

The next step in the evolution of P2P happened in 2009, with the introduction of Bitcoin and a word that many would use without being able to properly explain how it works: the blockchain. By now it’s nearly impossible to count the new cryptocurrencies that flood the market every day, not to mention the overall clutter of news and headlines focusing on the rise and fall of anything that can remotely be called a blockchain byproduct.

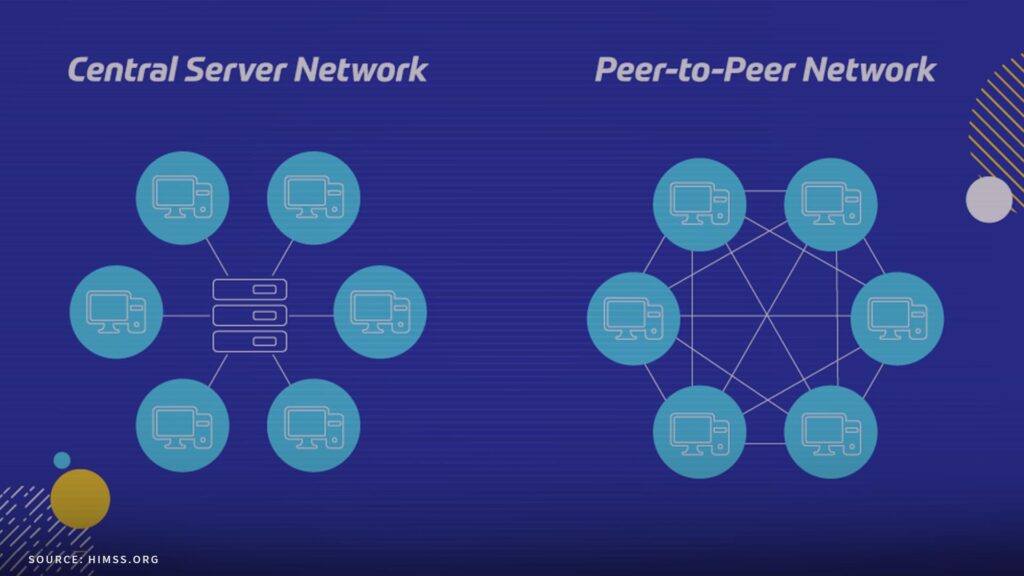

Back then, it was nothing short of a revolution. Based on a Peer-to-Peer network, the blockchain is essentially a distributed database managed by a network of nodes, with each node holding a copy of the entire blockchain. A node is basically a computer connected to the network. The Peer-to-Peer architecture is a key feature of the blockchain and enables the network to operate in a decentralized, transparent and secure manner.
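To illustrate the idea of a database that every node can hold and check for itself, here is a minimal Python sketch of a hash-linked chain of blocks. It is a heavily simplified illustration under our own assumptions: the function names and the sample transactions are made up, and it leaves out everything that makes a real blockchain work at scale, such as consensus, mining and networking.

```python
import copy
import hashlib
import json

GENESIS_PREV_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, data, prev_hash):
    """Return the SHA-256 hex digest of a block's contents."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain, data):
    """Append a block that stores the hash of the block before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_PREV_HASH
    index = len(chain)
    chain.append(
        {
            "index": index,
            "data": data,
            "prev_hash": prev_hash,
            "hash": block_hash(index, data, prev_hash),
        }
    )


def is_valid(chain):
    """Check each block's own hash and its link to the previous block."""
    prev_hash = GENESIS_PREV_HASH
    for block in chain:
        if block["prev_hash"] != prev_hash:
            return False
        expected = block_hash(block["index"], block["data"], block["prev_hash"])
        if block["hash"] != expected:
            return False
        prev_hash = block["hash"]
    return True


ledger = []
append_block(ledger, "alice pays bob 5")    # made-up sample data
append_block(ledger, "bob pays carol 2")

# Every node keeps its own full copy of the chain.
node_a = copy.deepcopy(ledger)
node_b = copy.deepcopy(ledger)

# A node that rewrites an old entry no longer passes validation.
node_b[0]["data"] = "alice pays bob 500"

print(is_valid(node_a), is_valid(node_b))
```

Because every block carries the hash of its predecessor, a node that changes an old entry ends up with a copy that fails the check, while the untouched copies held by the other nodes stay valid.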

More recent years brought a wide array of changes inside and outside of video games. P2P technology has been used for further file-sharing services like eMule, and it inspired the rise of Peer-to-Peer platforms like Airbnb and of apps we use on a regular basis, like WhatsApp or Signal. Video games, confronted with public outcry about potential lag and latency issues, came up with a possible solution called hybrid P2P.

Remember, a Peer-to-Peer network relies on each player’s computer (or console) acting as both a client and a server, while a dedicated server setup has a separate server computer. With hybrid P2P, a dedicated server is used to host matchmaking and game sessions, while the actual gameplay runs on a Peer-to-Peer network. The question of why this matters moves us directly into the discussion about the advantages and disadvantages of P2P when compared to dedicated server structures.
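The division of labor in a hybrid setup can be sketched in a few lines of Python. This is a toy model under our own assumptions, not how any particular game implements it: the class names, the player names and the addresses (taken from the reserved documentation IP ranges) are all hypothetical, and no real network traffic is involved.

```python
from dataclasses import dataclass, field


@dataclass
class Player:
    name: str
    address: str  # hypothetical "host:port" under which the player is reachable


@dataclass
class MatchmakingServer:
    """Central service that only forms sessions; it never relays gameplay."""

    session_size: int = 2
    waiting: list = field(default_factory=list)

    def join(self, player):
        """Queue a player; return the session once enough players are waiting."""
        self.waiting.append(player)
        if len(self.waiting) < self.session_size:
            return None
        session = self.waiting[: self.session_size]
        self.waiting = self.waiting[self.session_size:]
        return session


def peers_for(player, session):
    """Addresses a player connects to directly once the session exists."""
    return [p.address for p in session if p.name != player.name]


server = MatchmakingServer(session_size=2)
ryu = Player("ryu", "203.0.113.10:7000")
ken = Player("ken", "198.51.100.20:7000")

first = server.join(ryu)    # None: still waiting for an opponent
session = server.join(ken)  # session complete, both players are handed over

print(peers_for(ryu, session))
print(peers_for(ken, session))
```

Once the server has handed out the addresses, its job is done: from here on the two players exchange their game data directly with each other.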

In a Peer-to-Peer network, the data doesn’t have to travel to a central server before it moves on to the next peer. This can be especially helpful if you have a great connection but the nearest data center is on the other side of the continent. However, scaling the P2P network, for example by adding more players to the pool, puts additional strain on everyone’s computer, as all of them now have to process a larger number of data packets.
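How quickly that strain grows can be estimated with a bit of arithmetic. The Python sketch below assumes a full mesh, meaning every peer keeps a direct connection to every other peer; this is our simplifying assumption, and real games may use other topologies such as a single player acting as host. The function names are ours.

```python
def mesh_links(players):
    """Total direct connections when every peer talks to every other peer."""
    return players * (players - 1) // 2


def mesh_sends_per_peer(players):
    """Copies of each state update a single peer has to send in a full mesh."""
    return players - 1


def server_links(players):
    """Connections when every player only talks to one central server."""
    return players


for n in (2, 4, 8, 16, 32):
    print(n, mesh_links(n), mesh_sends_per_peer(n), server_links(n))
```

With two players there is a single connection either way. With 16 players, a full mesh already needs 120 connections, and every machine sends each update 15 times, whereas a central server setup needs 16 connections in total.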

And even if you have the best possible internet connection, you might still get the feeling that the overall performance is not good. The reason could be that the actual host of the session has meager bandwidth, or that their machine is not powerful enough to handle their own game, everybody else’s and the stream of incoming and outgoing data. That said, a connection via Peer-to-Peer might well end up being faster if the number of players is small and they are located close to each other.
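To make the latency argument a bit more concrete, here is a minimal Python sketch. All numbers are made up for illustration, and the function names are our own rather than part of any networking library. It simply compares a direct path between two players with a path that takes a detour through a server.

```python
def direct_latency_ms(a_to_b_ms):
    """One-way delay when two peers talk to each other directly."""
    return a_to_b_ms


def relayed_latency_ms(a_to_server_ms, server_to_b_ms, processing_ms=0):
    """One-way delay when every packet takes a detour through a central server."""
    return a_to_server_ms + processing_ms + server_to_b_ms


# Hypothetical case 1: two players in the same city, server far away.
neighbours_direct = direct_latency_ms(10)
neighbours_relayed = relayed_latency_ms(60, 60, processing_ms=5)

# Hypothetical case 2: players far apart, server roughly in the middle.
distant_direct = direct_latency_ms(150)
distant_relayed = relayed_latency_ms(70, 75, processing_ms=5)

print(neighbours_direct, neighbours_relayed)
print(distant_direct, distant_relayed)
```

In the first case the detour is expensive, in the second it costs next to nothing. This is why small sessions with players close to each other benefit the most from a direct connection.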

This is why many 1vs1 games like Street Fighter V use Peer-to-Peer: a dedicated server would actually add another wall of latency. However, scale things up and you might find yourself troubled by lag spikes and unresponsive gameplay. At the same time, this “scalability” is a point that speaks in favor of P2P, as the network can absorb a rapid increase in user numbers very quickly, without the need to add another server. Also, every new machine adds computing power, which could translate into faster file transfer.

Issues regarding poor connections and inconsistent gameplay for individual players will stay the same, though. The routing of the data streams requires powerful algorithms that have to keep up with the rising number of participants. Finding a thread online that complains about bad netcode isn’t that hard, but such threads rarely take into account whether there was a tipping point that made a game, a match or a session feel increasingly worse. Scalability ends up being a double-edged sword, offering many points for and against P2P network structures.

A dedicated server structure eliminates many of the aforementioned issues by putting a centralized server in charge. A server with the single purpose of running a hosted game is a source of reliably good and fair gameplay experiences, as it cancels out any advantage a powerful computer or a faster internet connection might give to individual players. Granted, servers have to be set up and cost money upfront, which gives P2P an initial head start in terms of expenditure.

This can decrease development cost and therefore the price of full games, which leads to the impression that P2P is much cheaper overall than dedicated servers. However, this is somewhat based on false assumptions. If, for example, GPORTAL hosts a server for your game, any cost involved comes down to a monthly fee, paid by the players of the game. In almost any case, there are at least two or three people who share the cost of a server, sometimes twenty or more. It’s easy to see how this makes it affordable for everyone.

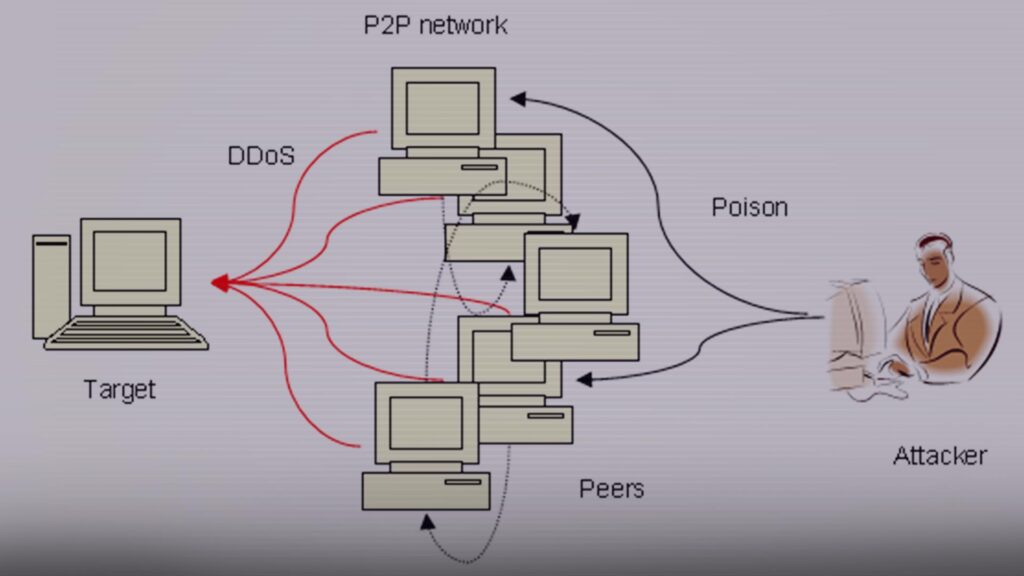

Using a dedicated server with encryption also leads to more safety, because the way P2P works, by directly connecting players, makes it very vulnerable to outside attacks and cheating. This leads to player frustration and could potentially mean the loss of a player base. At that point, instead of spending less money, you are not earning anything at all. However, this is the worst-case scenario and rarely reflects real-world circumstances.

Peer-to-Peer is powerful and a great way to connect players with each other, provided a studio or developer has thought about the scope and longevity of a game beforehand. Games that were designed with dedicated servers in mind have a certain kind of longevity, offering persistent game worlds and long-term player engagement with full control over modifications. Playing 1vs1 online matches in a beat ’em up, on the other hand, clearly sounds like a better fit for a P2P approach.

In the end, it’s clear that both technologies have their advantages and disadvantages. Finding online threads that are hostile to one technology or the other merely takes a quick look at your preferred search engine. Our goal is not to advertise dedicated servers as the only way to “do multiplayer” but to emphasize the multifunctional, “Swiss army knife” power they provide over the initial “low cost, high flexibility” of Peer-to-Peer online gaming. As a developer, you have to keep these factors in mind when designing your next sandbox survival, online shooter or racing game.

Sandbox is the right word to finish this article, as it points to our next topic, which is less technical and involves many blocks and bits. We’re going to take a close look at Minecraft, the game that still represents safe and never-ending fun, played by almost everybody from 6 to 99 and above. It was and still is a milestone game that deserves another look at its history and (ongoing) development, and at the influence it has on video games and hosting companies alike.